Understanding Service Status

A service instance is the combination of a Highly Available cluster service and a cluster node on which that service is eligible to run. For example, in a 2-node cluster each service will be configured to have two available instances: one on each node in the cluster.

Each service instance has its own state, consisting of three items: pool state, failover mode and blocked state.

Pool State

A service instance in the cluster will always be in a specific state. These states are divided into two main groups, active states and inactive states[1]. Individual states within these groups are transitional. For example, a starting state will transition to a running state once the startup steps for that service have completed successfully, and similarly a stopping state will transition to a stopped state once all the shutdown steps have completed successfully (note that the stopping to stopped transition also moves the service instance from the active state group to the inactive state group).

The following describes the individual states a service instance can be in.

Active States

When the service instance is in an active state, it will be utilising the resources of that service (e.g. an imported ZFS pool, a plumbed in VIP etc.). In this state the service is considered to be in use on that node and will not be started on any other node in the cluster until it transitions to an inactive state. For example, a service that is stopping on a node is still in an active state, and cannot yet be started on any other node in the cluster until it transitions to an inactive state such as stopped or broken_safe[2].
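The rule above can be expressed as a simple check. This is a minimal Python sketch, not the cluster's actual implementation: the function name `may_start_on` and the representation of a service's instances as a node-to-state mapping are hypothetical, and the set of active states is taken from the list that follows.

```python
# Hypothetical model: the active state names listed in this section.
ACTIVE_STATES = {
    "starting", "running", "stopping", "broken_unsafe",
    "panicking", "panicked", "aborting",
}


def may_start_on(node: str, states_by_node: dict[str, str]) -> bool:
    """Return True if no instance of the service is active on any other node.

    states_by_node maps each node name to the pool state of this
    service's instance on that node (a hypothetical representation).
    """
    return all(
        state not in ACTIVE_STATES
        for other, state in states_by_node.items()
        if other != node
    )


# A service still stopping on node-a prevents a start on node-b.
print(may_start_on("node-b", {"node-a": "stopping", "node-b": "stopped"}))     # False
print(may_start_on("node-b", {"node-a": "broken_safe", "node-b": "stopped"}))  # True
```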

Active states are:

starting

The service is in the process of starting on this node. Service start scripts are currently running; when they complete successfully the service instance will transition to the running state.

running

The service is running on this node and only this node. All service resources have been brought online. For ZFS clusters this means the main ZFS pool and any additional pools have been imported, any VIPs have been plumbed in and any configured logical units have been brought online.

stopping

The service is in the process of stopping on this node. Service stop scripts are currently running; when they complete successfully the service instance will transition to the stopped state.

broken_unsafe

The service has transitioned to a broken state because service stop or abort scripts failed to run successfully. Some or all service resources are likely to be online so it is not safe for the cluster to start another instance of this service on another node.

This state is caused by one of two circumstances:

- The service failed to stop: for example, a zpool imported as part of the service startup failed to export during shutdown, or the cluster was unable to unplumb a VIP associated with the service.

- The service failed to start and abort scripts were run in order to undo any actions performed during service startup (for example, if a zpool was imported during the start phase then the abort scripts will attempt to export that pool). However, one of the abort actions failed, so the cluster was unable to shut the service down cleanly.

panicking

While the service was in an active state on this node, it was seen in an active state on another node. Panic scripts are running, and when they are finished the service instance will transition to panicked.

panicked

While the service was in an active state on this node, it was seen in an active state on another node. Panic scripts have been run.

aborting

Service start scripts failed to complete successfully. Abort scripts are running (these are the same as the service stop scripts). When the abort scripts complete successfully the service instance will transition to the broken_safe state (an inactive state). If any of the abort scripts fail to run successfully then the service transitions to the broken_unsafe state and manual intervention is required.
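The script-driven transitions among the active states, and the condition that triggers a panic, can be summarised in a small Python model. This is an illustrative sketch only: `TRANSITIONS`, `next_state` and `conflicting_nodes` are hypothetical names, and the node-to-state mapping is an assumed representation rather than anything the cluster exposes.

```python
# Hypothetical model of the script-driven transitions described above:
# (current state, scripts succeeded) -> next state.
TRANSITIONS = {
    ("starting", True): "running",
    ("stopping", True): "stopped",
    ("stopping", False): "broken_unsafe",  # stop scripts failed; resources may still be online
    ("aborting", True): "broken_safe",
    ("aborting", False): "broken_unsafe",  # abort scripts failed; manual intervention needed
    ("panicking", True): "panicked",       # panic scripts have finished
}

ACTIVE_STATES = {
    "starting", "running", "stopping", "broken_unsafe",
    "panicking", "panicked", "aborting",
}


def next_state(state: str, succeeded: bool) -> str:
    """Look up the state an instance moves to when its current scripts finish."""
    try:
        return TRANSITIONS[(state, succeeded)]
    except KeyError:
        raise ValueError(
            f"transition not modelled: {state!r}, succeeded={succeeded}"
        ) from None


def conflicting_nodes(states_by_node: dict[str, str]) -> set[str]:
    """Nodes whose active instance would see the service active on another node.

    One active instance is normal; with two or more, every active
    instance meets the panic condition.
    """
    active = {node for node, state in states_by_node.items() if state in ACTIVE_STATES}
    return active if len(active) > 1 else set()
```

A failed start is deliberately absent from the table: whether it leads to aborting or straight to broken_safe depends on whether any resources were already online, as described for the broken_safe state. What happens if panic scripts themselves fail is not covered in this section, so that case is not modelled either.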

Inactive States

When a service instance is in an inactive state, no service resources are online. That means it is safe for another instance of the service to be started elsewhere in the cluster.

Inactive states are:

stopped

The service is stopped on this node. No service resources are online.

broken_safe

This state can be the result of either of the following circumstances:

- The service failed to start on this node but had not yet brought any service resources online. It transitioned directly to broken_safe when it failed.

- The service failed to start after having brought some resources online. Abort scripts were run to take the resources back offline and those abort scripts finished successfully.
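The outcome of a failed start therefore depends on two facts: whether any resources were brought online before the failure, and, if so, whether the abort scripts succeeded. A minimal sketch, using a hypothetical `state_after_failed_start` helper that is not part of any cluster API:

```python
from typing import Optional


def state_after_failed_start(resources_online: bool, abort_ok: Optional[bool] = None) -> str:
    """Pool state of an instance after its start scripts fail.

    resources_online: whether any service resources were brought online
    before the failure.
    abort_ok: whether the abort scripts succeeded; None means they are
    still running. Ignored when no resources were online.
    """
    if not resources_online:
        # Nothing to undo: the instance goes straight to broken_safe.
        return "broken_safe"
    if abort_ok is None:
        # Abort scripts are still undoing the partial start.
        return "aborting"
    return "broken_safe" if abort_ok else "broken_unsafe"
```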

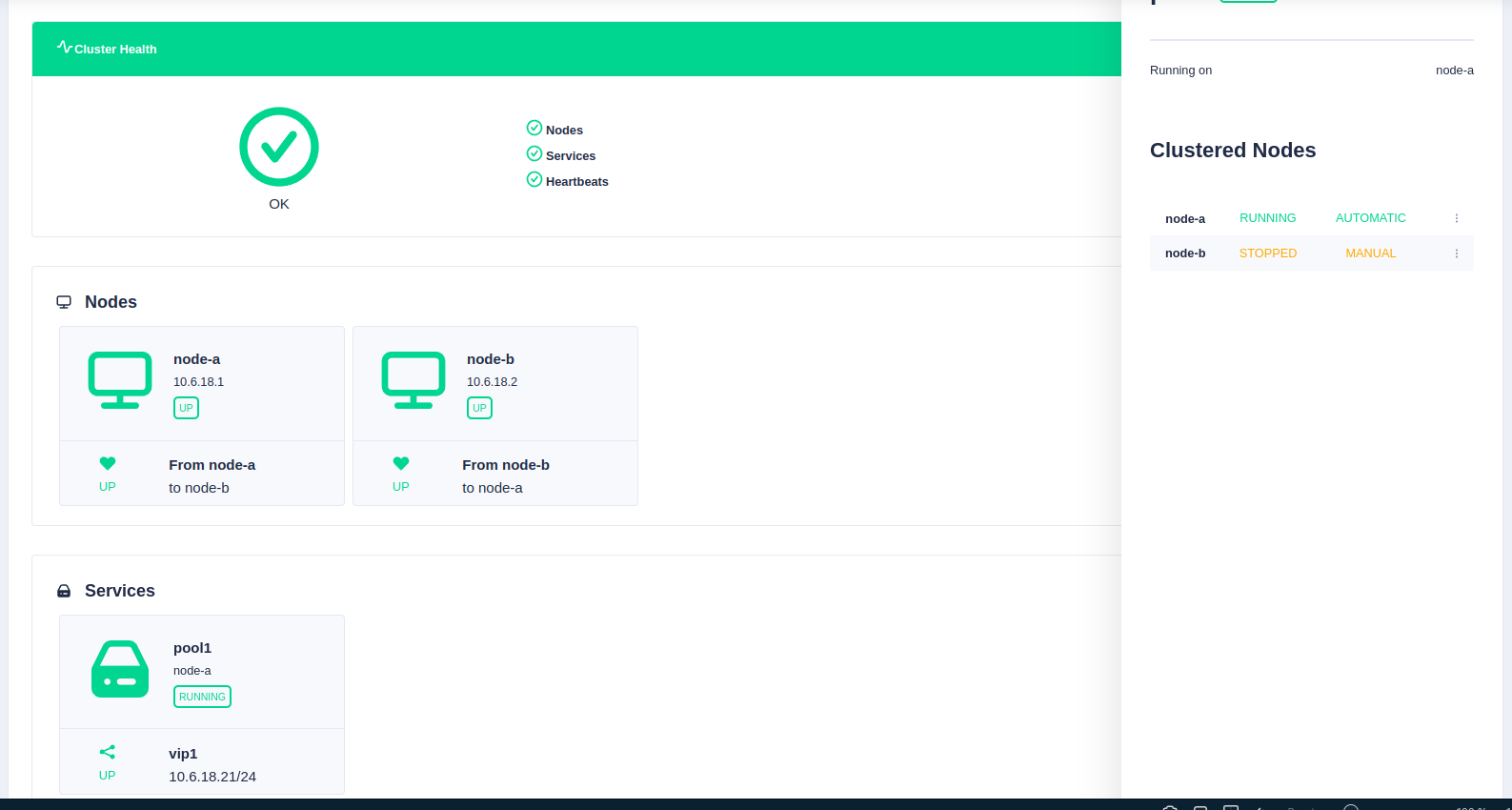
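The two state groups can be captured in a small enumeration that makes the active/inactive split explicit. This is an illustrative Python model only; the `PoolState` class and `is_active` helper are hypothetical and do not correspond to any cluster API.

```python
from enum import Enum


class PoolState(Enum):
    """Hypothetical model of the pool states described in this section."""
    STARTING = "starting"
    RUNNING = "running"
    STOPPING = "stopping"
    BROKEN_UNSAFE = "broken_unsafe"
    PANICKING = "panicking"
    PANICKED = "panicked"
    ABORTING = "aborting"
    STOPPED = "stopped"
    BROKEN_SAFE = "broken_safe"


# Active states: the instance may be holding service resources.
ACTIVE_STATES = frozenset({
    PoolState.STARTING, PoolState.RUNNING, PoolState.STOPPING,
    PoolState.BROKEN_UNSAFE, PoolState.PANICKING, PoolState.PANICKED,
    PoolState.ABORTING,
})

# Inactive states: everything else, i.e. no service resources are online.
INACTIVE_STATES = frozenset(PoolState) - ACTIVE_STATES


def is_active(state: PoolState) -> bool:
    return state in ACTIVE_STATES
```

Deriving the inactive group as the complement of the active group means a new state cannot silently belong to neither group.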

Mode

Each service instance has a mode setting of either automatic or manual. The mode of a service is specific to each node in the cluster, so a service can be manual on one node and automatic on another. The modes mean the following:

automatic

Automatic mode means the service instance will be automatically started when all of the following requirements are satisfied:

- The service instance is in the stopped state

- The service instance is not blocked

- No other instance of this service is in an active state

manual

Manual mode means the service instance will never be automatically started.
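The automatic start conditions can be written as a single predicate. A minimal sketch: the `Instance` dataclass, its fields and `should_auto_start` are hypothetical names used for illustration, with mode and blocked status stored per instance because mode is set per node.

```python
from dataclasses import dataclass

ACTIVE_STATES = {
    "starting", "running", "stopping", "broken_unsafe",
    "panicking", "panicked", "aborting",
}


@dataclass
class Instance:
    node: str
    state: str       # pool state on this node, e.g. "stopped"
    automatic: bool  # mode on this node: True = automatic, False = manual
    blocked: bool    # set by the cluster's monitoring, not by the user


def should_auto_start(candidate: Instance, all_instances: list[Instance]) -> bool:
    """True if `candidate` meets every requirement for an automatic start."""
    if not candidate.automatic:
        return False  # manual instances are never started automatically
    others_active = any(
        inst.state in ACTIVE_STATES
        for inst in all_instances
        if inst is not candidate
    )
    return candidate.state == "stopped" and not candidate.blocked and not others_active
```

The sketch only tests eligibility; if several instances are eligible at once, which one the cluster actually starts is not covered in this section.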

Blocked

The service blocked state is similar to the service mode except that instead of being set by the user, it is controlled automatically by the cluster's monitoring features.

For example, if network monitoring is enabled then the cluster constantly checks the network connectivity of the interfaces that the service's VIPs are plumbed in on. If one of those interfaces becomes unavailable (link down, cable unplugged, switch failure etc.) then the cluster will automatically transition that service instance to blocked. Furthermore, a service does not have to be running on a node for that service instance to become blocked: if a network interface on a node becomes unavailable, blocking the service instance on that node prevents the cluster from failing the service over to a node that cannot run it successfully.
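The network monitoring example can be sketched as a function that derives the blocked nodes from link status. This is a minimal illustration, not the cluster's monitoring logic: `blocked_nodes` is a hypothetical helper, and it assumes the service's VIPs use the same interface names on every node.

```python
def blocked_nodes(vip_interfaces: set[str], links_up: dict[str, set[str]]) -> set[str]:
    """Return the nodes on which this service's instance would be blocked.

    vip_interfaces: interfaces the service's VIPs are plumbed in on
    (assumed to have the same names on every node).
    links_up: for each node, the interfaces whose link is currently up.
    A node is blocked if any VIP interface is down there, whether or not
    the service is currently running on that node.
    """
    return {node for node, up in links_up.items() if not vip_interfaces <= up}


# eth1 carries the VIP; its link is down on node-b only.
print(blocked_nodes({"eth1"}, {"node-a": {"eth0", "eth1"}, "node-b": {"eth0"}}))  # {'node-b'}
```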

A service instance's blocked state can be either:

blocked

- The cluster's monitoring has detected a problem that affects this service instance.

- This service instance will not start until the problem is resolved, even if the service is in automatic mode.

- If a service instance becomes blocked when it is already running, the cluster may decide to stop that instance to allow it to be started on another node. This will only happen if there is another service instance in the cluster that is unblocked, automatic and stopped.

unblocked

- The service instance is free to start as long as it is in automatic mode.
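The precondition for stopping a blocked, running instance in favour of another node can be sketched as follows. The text says the cluster *may* stop the instance when this holds, so the sketch only reports whether a failover is possible, not whether it will happen. The `Instance` dataclass and `failover_possible` helper are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Instance:
    node: str
    state: str       # pool state, e.g. "running" or "stopped"
    automatic: bool  # True = automatic mode, False = manual
    blocked: bool


def failover_possible(current: Instance, all_instances: list[Instance]) -> bool:
    """True if a blocked, running instance has an unblocked, automatic, stopped peer."""
    if current.state != "running" or not current.blocked:
        return False
    return any(
        peer is not current
        and not peer.blocked
        and peer.automatic
        and peer.state == "stopped"
        for peer in all_instances
    )
```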

[1] For example, running and stopping are members of the active group, whereas stopped is a member of the inactive group.

[2] A broken_safe state is considered a stopped state because, although the service was unable to start up successfully, it was able to free up all of its resources during the shutdown/abort step (hence the "safe" in broken_safe).